Claude Mythos and Anthropic's Pronouncements

As Queen sang, "Is this the real life, or is this just marketing?"

I wasn’t that concerned until I saw this tweet from Theranos founder Elizabeth Holmes.

Then I thought of Moira, from Schitt’s Creek:

This week, Anthropic announced Project Glasswing, “a new initiative that brings together Amazon Web Services, Anthropic, Apple, Broadcom, Cisco, CrowdStrike, Google, JPMorganChase, the Linux Foundation, Microsoft, NVIDIA, and Palo Alto Networks in an effort to secure the world’s most critical software.” (emphasis mine).

The best AI use case so far is writing code. It’s fairly simple to see why: when code is written, it either runs or it doesn’t. There’s an immediate feedback loop. So the AI writes the code, tests it, and, if it doesn’t work, iterates, all near-autonomously.

Claude Code, Anthropic’s app for using AI to write code, is the industry-standard AI coding platform.

Claude Code is currently powered by Claude Opus 4.6, the best-in-class AI model for writing code. It’s significantly better than any other AI model on the market.

Anthropic trained a huge new AI model called Mythos that vastly outperforms Claude Opus 4.6 on all coding benchmarks.

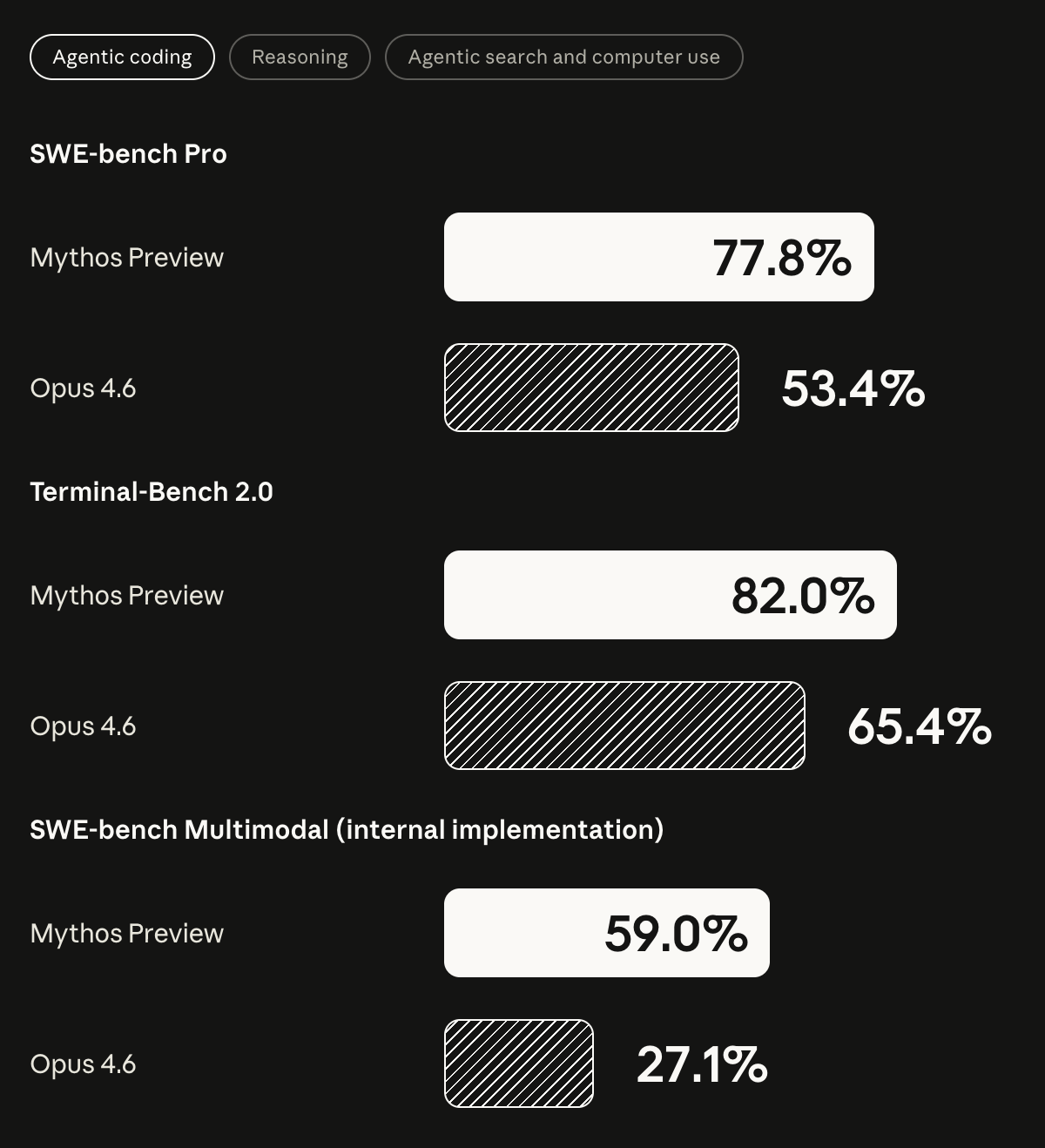

Mythos is amazing at coding. There are various objective benchmarks for code quality. In the past, a 2- or 3-point improvement over the current state of the art was enough to get all developers to switch over to the new model.

The current state-of-the-art coding AI model is Claude Opus 4.6. Mythos has outperformed the state of the art by 24%.

Mythos is so advanced that it allegedly found “thousands” of major security vulnerabilities across all major operating systems (think Mac, Windows, Linux), web browsers, and core applications. These are referred to as “zero-day” vulnerabilities, meaning that the developers of the respective software didn’t know they existed.

Anthropic was so surprised at the results that they called industry leaders and are creating a strike force to identify and resolve the vulnerabilities in these critical technology platforms before AI models like this become widely available. They’ve made Mythos available to select enterprises, but they haven’t made it available to the public and “they may never.”

To summarize:

Anthropic trained the most advanced AI model in Claude Mythos.

Claude Mythos is 24% better than Claude Opus 4.6, the current state of the art.

Anthropic used Claude Mythos to identify thousands of security vulnerabilities in critical software used every day.

Anthropic called industry leaders to create a strike force, Project Glasswing, to secure these critical pieces of software.

Anthropic decided to publish a huge white paper detailing Mythos, announcing the project, and proclaiming to the world that these vulnerabilities haven’t been fixed yet but will be.

Anthropic also published the pricing for Mythos, which is 5X higher than that of Opus 4.6.

I, for one, am glad that Anthropic has created this initiative and is taking this seriously. I’m glad that investors gave them the money to do these tests. I’m glad that US administrations over the last 10-15 years have allowed the AI sector to flourish.

It’s not the actions they’re taking that I’m bothered by. It’s the fact that they published all this before fixing the critical vulnerabilities and not after.

Anthropic has a history of this type of sky-is-falling marketing.

Two quick YouTube searches (“Dario Amodei AI jobs” and “Dario Amodei AI safety”) brought up these and way more examples. One ChatGPT search (“Find me videos, tweets, etc where Dario, the CEO of Anthropic, claimed that AI would destroy all jobs or generally had sky-is-falling messaging”) yielded a whopping 82 links to Anthropic’s sky-is-falling messaging (although I didn’t click them all, so there is surely some overlap; you get the point).

This has been par for the course for a while now. Anthropic was started by former OpenAI employees over fundamental disagreements about how AI should be handled. For example, the Anthropic founding team were at OpenAI when the company said GPT-2 was too dangerous to be released.

I think it’s incredibly hard to separate the reality (mission-critical technologies should be secured before adversaries of the West have access to AI models like this) from the marketing.

Am I supposed to thank Anthropic for being team-good-guys? Am I supposed to fear this AI falling into the hands of team-bad-guys? Or is this a really great test of wayyyy higher AI pricing?

And while everyone in Silicon Valley is losing their minds about the implications of Mythos, is it really just because 1 engineer + Claude Code, if Mythos is ever released, may replace 7 or 8 or 9 engineers out of 10?

I’ve stated in the past that I think the best industry to be in is AI and the second best is cybersecurity. That is seemingly more true than ever. But if AI can find the vulnerabilities, and there’s an expectation that it can help in fixing them, and it’s “dangerous” to release it to the public (according to Anthropic), then why publish this white paper before fixing them instead of after?

Much will be made of Mythos in the coming weeks. I hope it’s used to patch the vulnerabilities. It’s unquestionable that the United States will be stronger with 3 or 4 AI models this advanced, all owned by different companies.

But if it’s so dangerous, why make it a marketing campaign?